A beginner enters a new competitive system and wins. The feeling that follows is rarely interpreted as a probabilistic event — a natural outcome of variance distributing some positive results alongside negative ones at the start of any new engagement. Instead, it registers as something more significant: confirmation. The early win feels like the system recognizing something real about the person who just participated. It feels, in other words, like evidence.

Understanding why early success feels like evidence requires looking at the specific cognitive mechanisms that transform a statistically unremarkable early result into a subjectively compelling signal about personal capability — and why that transformation produces some of the most consequential errors in judgment that competitive environments generate.

The Pattern Recognition Problem

The foundational mechanism is one of the oldest in cognitive psychology: the human mind is built to find patterns. This is not a flaw — it is a deeply adaptive feature. In most environments across most of human evolutionary history, the ability to quickly identify patterns in sensory data was genuinely useful. A repeated noise pattern indicated a predator. A repeated growth pattern indicated a food source. Acting on early pattern signals — before the full data set was available — was often safer than waiting for statistical certainty.

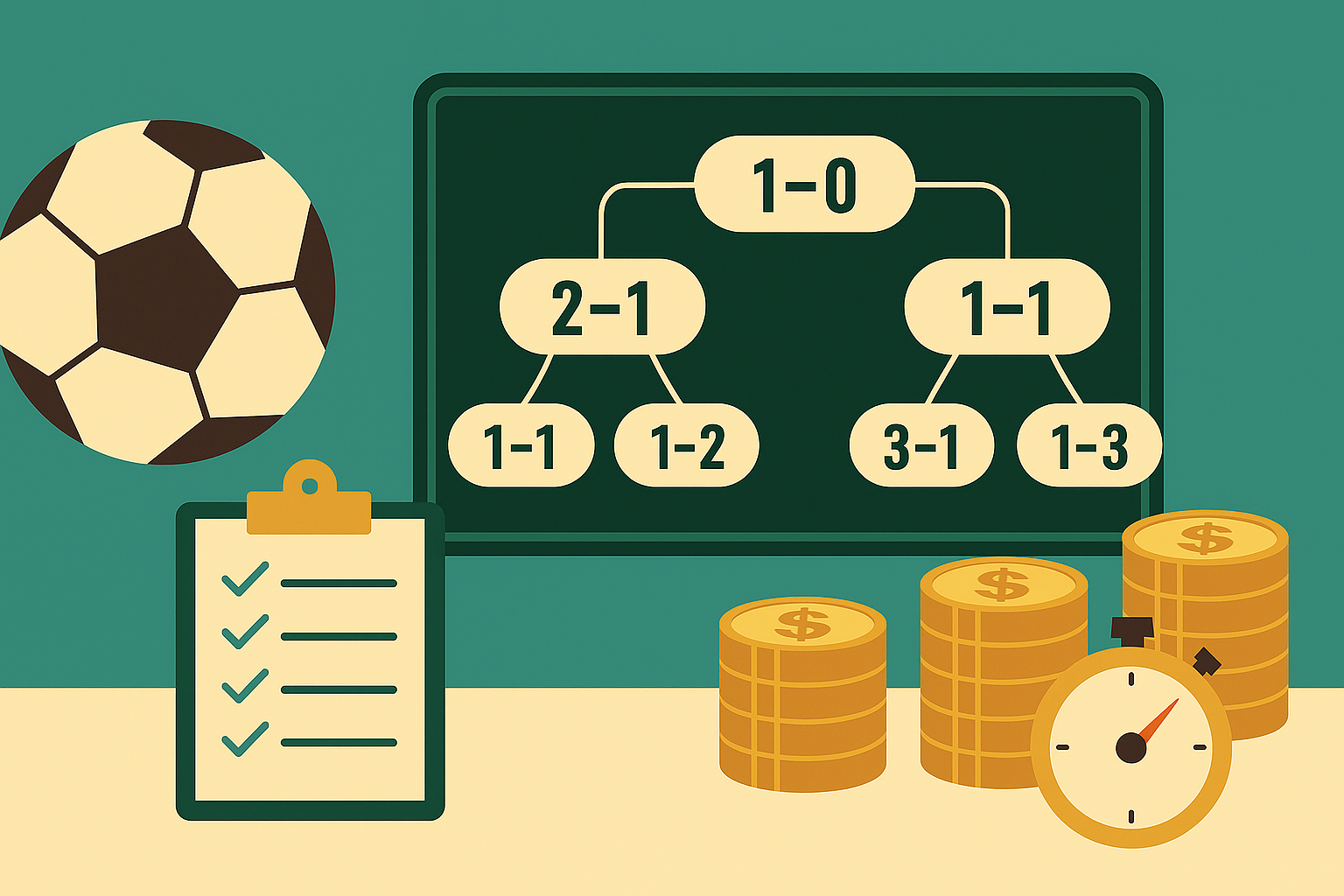

In competitive systems governed by probability and variance, this same pattern-recognition instinct becomes a liability. A run of early wins does not, in most cases, represent a meaningful pattern. It represents variance: the natural clustering of outcomes that probability produces even in sequences that are entirely random. But a mind that evolved to detect real patterns does not automatically distinguish between patterns produced by underlying structure and clusters produced by chance. Both feel the same. Both trigger the same interpretive response: something is happening here that reflects a genuine underlying cause.
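
To make the clustering claim concrete, here is a minimal simulation sketch. It assumes a hypothetical game with a 50% win chance and independent outcomes, and the function names and default parameters are illustrative choices, not drawn from any particular system. It estimates how often a purely random 20-game sequence contains a streak of four or more wins.

```python
import random


def longest_win_streak(outcomes):
    """Return the length of the longest run of consecutive wins (True values)."""
    longest = current = 0
    for won in outcomes:
        current = current + 1 if won else 0
        longest = max(longest, current)
    return longest


def share_with_streak(n_games=20, min_streak=4, win_prob=0.5,
                      n_trials=10_000, seed=0):
    """Estimate how often a purely random sequence contains a long win streak.

    All defaults are illustrative assumptions (a coin-flip game, 20 games).
    """
    rng = random.Random(seed)  # fixed seed so the estimate is reproducible
    hits = 0
    for _ in range(n_trials):
        outcomes = [rng.random() < win_prob for _ in range(n_games)]
        if longest_win_streak(outcomes) >= min_streak:
            hits += 1
    return hits / n_trials


if __name__ == "__main__":
    share = share_with_streak()
    print(f"Share of random 20-game sequences with a 4+ win streak: {share:.2f}")
```

Under these assumptions the exact probability is roughly 48%, so the estimate should land a little under one half. A streak that feels like a signal shows up in about every second sequence with no skill involved at all.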

Research on the hot hand fallacy, first documented systematically by Gilovich, Vallone, and Tversky in 1985, demonstrates this tendency with particular clarity. In that study, 91% of the basketball fans surveyed believed that a player had a better chance of making a shot after making his previous two or three shots than after missing them, even though the authors' analysis of shooting records found no evidence that a shot's outcome depended on the previous ones. The subjective experience of a streak is identical whether the streak reflects genuine skill elevation or random clustering. The mind cannot tell the difference from the inside.

Self-Attribution and the Interpretation of Early Wins

Pattern recognition explains why early success feels meaningful. Self-attribution bias explains why it feels personal. The tendency to attribute positive outcomes to internal factors (skill, judgment, superior insight) while attributing negative outcomes to external factors is one of the most consistently documented patterns in behavioral research, and it is central to why early wins lead to misjudgment about short-term results.

For a beginner experiencing early wins, this bias operates without the corrective knowledge that experience provides. An experienced participant who wins can calibrate their interpretation: they know the variance of the system, they understand the role of luck in individual outcomes, and they have a baseline expectation of win rates that allows them to contextualize a run of positive results. A beginner has none of this calibration. The early wins arrive without context, and the most available interpretation — the one that self-attribution bias makes automatic — is that the wins reflect genuine personal capability.

This attribution feels not just comfortable but correct, because the beginner has no empirical basis for doubting it. They have won. Winning is a positive outcome. Positive outcomes feel like confirmation. The logical structure of the inference — “I won, therefore I am good at this” — seems compelling precisely because it follows the form of real evidence, even when the sample size is too small to support the conclusion.
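
One way to see how weak the inference "I won, therefore I am good at this" is on a small sample is a simple Bayesian update. The sketch below is illustrative only. It assumes a deliberately crude two-type world in which a participant is either "skilled" (60% win rate) or "unskilled" (50% win rate), with 10% of newcomers being skilled. All three numbers are hypothetical.

```python
from math import comb


def binomial_likelihood(wins, games, win_prob):
    """Probability of exactly `wins` wins in `games` independent games."""
    return comb(games, wins) * win_prob**wins * (1 - win_prob)**(games - wins)


def posterior_skilled(wins, games, prior_skilled=0.10,
                      skilled_rate=0.60, unskilled_rate=0.50):
    """Posterior probability of being 'skilled' given the observed record.

    The prior and both win rates are illustrative assumptions, not estimates.
    """
    like_skilled = binomial_likelihood(wins, games, skilled_rate)
    like_unskilled = binomial_likelihood(wins, games, unskilled_rate)
    evidence = (prior_skilled * like_skilled
                + (1 - prior_skilled) * like_unskilled)
    return prior_skilled * like_skilled / evidence


if __name__ == "__main__":
    print(f"After 4 wins in 5:    {posterior_skilled(4, 5):.3f}")
    print(f"After 80 wins in 100: {posterior_skilled(80, 100):.3f}")
```

With these assumed numbers, four wins in five games moves the probability of being skilled from 10% to only about 16%, while the same win rate sustained over 100 games pushes it close to 1. The record feels equally impressive in both cases, but only the larger sample carries real evidential weight.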

The Overconfidence Cascade

The consequences of treating early success as evidence extend beyond the initial misattribution. Once early wins are interpreted as confirmation of genuine capability, a cascade of secondary effects follows that compounds the original error.

Confidence rises disproportionately to the evidence that actually supports it. Research on overconfidence consistently shows that confidence and accuracy diverge most sharply when individuals are making judgments in domains where they have limited experience — exactly the condition that early success in a new system creates. In one classic experiment, participants’ confidence in their judgments increased from 33% to 53% as they received more information about a problem, while their actual accuracy remained below 30%. More information — or in the context of competitive systems, more early wins — produced more confidence without producing more accuracy.

This divergence has a specific practical consequence: the beginner who interprets early wins as evidence of skill tends to increase their stakes, expand their activity, and reduce their caution at precisely the point in their development when the opposite approach would serve them best. The early wins that felt like evidence actually mark the most dangerous period of the learning curve, because they create confidence without the underlying competence that should accompany it.

Small Samples and the Illusion of Certainty

The statistical reality that early success obscures is straightforward: small sample sizes cannot support strong inferences. The outcome of three or five or even ten early interactions with a competitive system tells a participant very little about their underlying capability relative to that system. The natural variance of most competitive environments is large enough that a run of positive early results is consistent with a wide range of underlying skill levels — including no particular skill advantage at all.
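
The point can be checked with a short binomial calculation. The sketch below assumes independent games with a fixed win probability, and the three underlying win rates are hypothetical values chosen for illustration. It computes how likely a strong-looking start of at least four wins in five games is under each rate.

```python
from math import comb


def prob_at_least(k, n, p):
    """Probability of at least k wins in n independent games with win chance p."""
    return sum(comb(n, i) * p**i * (1 - p)**(n - i) for i in range(k, n + 1))


if __name__ == "__main__":
    # Hypothetical underlying win rates: a disadvantage, no edge, a modest edge.
    for p in (0.4, 0.5, 0.6):
        chance = prob_at_least(4, 5, p)
        print(f"true win rate {p:.1f}: P(at least 4 wins in 5) = {chance:.3f}")
```

Under these assumptions the same start occurs about 9% of the time for a participant at a disadvantage, about 19% of the time with no edge at all, and about 34% of the time with a modest edge. Five games cannot separate these cases.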

This is the core of what makes early success so cognitively dangerous. It generates the subjective experience of certainty — the feeling of having learned something real about oneself and the system — from a data set that cannot actually support that certainty. The feeling of certainty is real. The evidence behind it is not.

Experienced participants in any competitive system know this. They have seen enough variance across enough outcomes to understand that early results are the least reliable information the system provides. The beginner, by definition, has not yet accumulated the experience that would allow them to calibrate their interpretation of early wins against the full distribution of what variance actually produces.

What Early Success Actually Tells You

Early success, interpreted carefully, provides one piece of genuinely useful information: the system is not so difficult or unfavorable that positive results are structurally impossible. That is a meaningful data point. It is not, however, confirmation of skill, confirmation of a winning system, or confirmation that the results will continue.

The most productive interpretation of early wins is precisely the one that feels least satisfying: these results are drawn from a small sample in a high-variance environment, and the appropriate response is curiosity and continued observation — not confidence and increased commitment. That interpretive discipline is difficult to maintain against the pull of self-attribution bias and pattern recognition. But it is the discipline that separates participants who learn from competitive systems from those who are misled by them.
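
"Continued observation" can be given a rough quantitative shape with a confidence interval on the observed win rate. The sketch below uses the Wilson score interval, one standard choice among several, and assumes independent games with a constant win probability. The records it prints are hypothetical.

```python
from math import sqrt


def wilson_interval(wins, games, z=1.96):
    """Wilson score interval for a win rate; z=1.96 gives roughly 95% coverage."""
    if games <= 0:
        raise ValueError("games must be positive")
    p_hat = wins / games
    denom = 1 + z**2 / games
    center = (p_hat + z**2 / (2 * games)) / denom
    half_width = z * sqrt(p_hat * (1 - p_hat) / games
                          + z**2 / (4 * games**2)) / denom
    return center - half_width, center + half_width


if __name__ == "__main__":
    # Hypothetical records with the same 80% observed win rate.
    for wins, games in ((4, 5), (40, 50), (400, 500)):
        low, high = wilson_interval(wins, games)
        print(f"{wins}/{games} wins: plausible win rate {low:.2f} to {high:.2f}")
```

For four wins in five games the interval runs from under 40% to above 95%, which includes the no-edge value of 50%. Only as the same win rate persists over more games does the interval narrow enough to exclude it.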

Early success is a data point. Only time and sample size can tell you what it actually means.